A month after graduation, I'm well on my way to learning all sorts of crazy new things. This summer, I'm learning about...

-

Ham radio. On Tuesday, I attended the first session of a summer-long FCC amateur radio licensing class. I know very little about radios and their components: the president of GSFC's amateur radio club told a story about how easy it was to build a circuit to convert 5 volts down to 3.3 volts, and kept throwing out electronics jargon. I'm looking forward to increasing my knowledge of the subject!

-

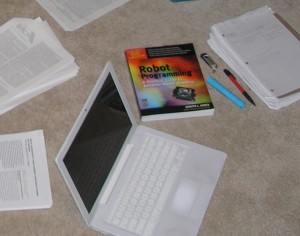

Computer innards. On a similarly technical note, my laptop's hard drive stopped spinning up last week. With the help of a computer engineering friend, I opened up the laptop and replaced the drive. Didn't even lose a screw! It's a small step into the world of computer hardware, but this was the first time I've opened up a computer, so it counts for a lot.

-

Multiple realizability. That is, people can take entirely different paths to the same place. People with ridiculously different beliefs can still be thinking exactly the same thing at exactly the same time, and ridiculously often.

-

Tae Kwon Do. An activity I'd never done before: martial arts! All the interns/apprentices in my lab this summer were encouraged to try it out, since the GSFC club is so friendly. We've learned miscellaneous self-defense maneuvers and more ways of kicking than I remember names for - I even got to kick through a board!

-

And software... My lab group is using a variety of software tools and open source code libraries that are new to me: ROS (the Robot Operating System), an SVN code repository, the MRPT libraries, the Point Cloud Library (PCL), and many more. I'm remembering C++, delving into path planning algorithms, and reading up on SLAM (simultaneous localization and mapping). Yes, it's a whirlwind of acronyms.